Google's tips for reducing AI training emissions

David Patterson wrote a blog post for Google’s The Keyword blog on how the company is minimizing AI’s carbon footprint, mostly covering his new paper on the topic: Carbon Emissions and Large Neural Network Training (Patterson et al. 2021). The paper went live on arXiv just half a week ago, but coming in at 22 data-dense pages, I think it’ll become a key piece of literature for sustainable AI. My two main takeaways from the paper were: (1) retroactively estimating AI training emissions is difficult, so researchers should measure them during model development; and (2) where, when and on what hardware models are trained can make an enormous difference in emissions.

Emissions estimates

Patterson et al. calculate the carbon footprint of several recent gargantuan models (T5, Meena, GPT-3, etc.) more precisely than previous work, which they found to be off by up to two orders of magnitude in some cases: the previous estimate for The Evolved Transformer’s Neural Architecture Search (NAS), for example, was 88 times too high (see Appendix D). This shows that, without knowing the exact datacenter, hardware, search algorithm choices, etc., it’s pretty much impossible to accurately estimate how much CO2 was emitted while training a model.

Because of this, one of the authors’ recommendations is for the machine learning community to include CO2 emissions estimates as a standard metric in papers: a measurement by the people training the models, who have much better access to all relevant information (see e.g. Table 4 in the paper and the Google Cloud page on their different datacenters’ carbon intensities), will always be more accurate than a retroactive estimate by another researcher. If conferences and journals start requiring emissions metrics in paper submissions and include them in acceptance criteria, it’ll encourage individual researchers and AI labs to take steps to reduce their emissions.

(As an aside, this is an interesting comparison that makes “tons of CO2-equivalent greenhouse gas emissions” a bit easier to think about: a whole passenger jet round trip flight between San Francisco and New York emits about 180 tons of CO2e; relative to that, “T5 training emissions are ~26%, Meena is 53%, Gshard-600B is ~2%, Switch Transformer is 32%, and GPT-3 is ~305% of such a round trip.” Puts it all in perspective quite well.)
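To turn those percentages back into tonnages, here is a minimal sketch that simply multiplies each quoted share by the 180-ton round trip. The names `SHARE_OF_FLIGHT` and `approx_tons` are made up for this illustration, and because the quoted percentages are rounded, the results are only approximations of the figures reported in the paper.

```python
# Reference flight and shares are the ones quoted in the aside above.
# The products are rough, rounded tonnages, not the paper's exact values.
FLIGHT_ROUND_TRIP_TCO2E = 180.0

SHARE_OF_FLIGHT = {
    "T5": 0.26,
    "Meena": 0.53,
    "Gshard-600B": 0.02,
    "Switch Transformer": 0.32,
    "GPT-3": 3.05,
}


def approx_tons(share, flight_tons=FLIGHT_ROUND_TRIP_TCO2E):
    """Convert a share of the reference flight into tons of CO2e."""
    return share * flight_tons


if __name__ == "__main__":
    for model, share in SHARE_OF_FLIGHT.items():
        print(f"{model}: ~{approx_tons(share):.0f} t CO2e")
```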

Emissions reductions

Patterson et al. also have some specific recommendations for reducing the CO2 emissions caused by training AI models:

- Large but sparsely activated DNNs can consume <1/10th the energy of large, dense DNNs without sacrificing accuracy despite using as many or even more parameters.

- Geographic location matters for ML workload scheduling since the fraction of carbon-free energy and resulting CO2e vary ~5X-10X, even within the same country and the same organization.

- Specific datacenter infrastructure matters, as Cloud datacenters can be ~1.4-2X more energy efficient than typical datacenters, and the ML-oriented accelerators inside them can be ~2-5X more effective than off-the-shelf systems.

Adding all these up, “remarkably, the choice of DNN, datacenter, and processor can reduce the carbon footprint up to ~100-1000X.” Two to three orders of magnitude! Since this research happened inside Google, its teams are now already optimizing where and when large models are trained to take advantage of these ideas. Another cool aspect of the paper is that each of the four specific focus points for reducing emissions (improvements in algorithms, processors, datacenters, and the grid’s energy mix) is accompanied by a business rationale for implementing it as a cloud provider — I’m guessing the researchers also used some of these arguments to push for change internally at Google. (Maybe, as a next step, they can also look into ramping model training based on signals from the intraday electricity market?)
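As a rough sanity check on that ~100-1000X figure, the sketch below naively multiplies the ranges quoted in the three bullets above. This assumes the factors are independent and stack multiplicatively, which is my simplification and not necessarily how the paper arrives at its number; "<1/10th the energy" is treated as a flat 10x, and the dictionary keys are just labels chosen for this example.

```python
from math import prod

# (low, high) improvement factors as quoted in the bullets above.
FACTORS = {
    "sparse vs. dense DNN": (10.0, 10.0),  # "<1/10th the energy", taken as 10x
    "datacenter location": (5.0, 10.0),
    "cloud vs. typical datacenter": (1.4, 2.0),
    "ML accelerator vs. off-the-shelf": (2.0, 5.0),
}


def combined_range(factors):
    """Multiply the low ends and the high ends of all factor ranges."""
    lows = [low for low, _ in factors.values()]
    highs = [high for _, high in factors.values()]
    return prod(lows), prod(highs)


if __name__ == "__main__":
    low, high = combined_range(FACTORS)
    print(f"combined reduction: ~{low:.0f}x to ~{high:.0f}x")
```

Under these assumptions the product lands at roughly 140x to 1000x, which is consistent with the quoted range.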

It’s great to see a paper on AI sustainability with so much measured data and actionable advice. I haven’t seen it going around much yet on Twitter, but I hope it’s read widely. Here’s the PDF link again; the fallacy debunks in section 4.5 (page 12) are an interesting bit I haven’t summarized above, so give it a click! I also hope that the paper’s recommendations are implemented: even just the relatively low-effort change of shifting our training workloads to different datacenters can already make a big difference. It’ll also be interesting to see whether there are any specific critiques of the paper’s emissions measurement methodology, since this is still just a preprint and half the paper’s authors work at Google.

The climate opportunity of gargantuan AI models

Climate change and the energy transition

Climate change is our generation’s biggest challenge, and the transitions needed to reduce emissions and prevent it from becoming catastrophic will affect almost every part of society in the coming decades. On their excellent Our World in Data page on CO2 and Greenhouse Gas Emissions, Hannah Ritchie and Max Roser write:

To make progress in reducing greenhouse gas emissions, there are two fundamental areas we need to focus on: energy (this encapsulates electricity, heat, transport, and industrial activities) and food and agriculture (which includes agriculture and land use change, since agriculture dominates global land use).

The biggest of these is energy: it’s responsible for almost three quarters of global greenhouse gas emissions. Decarbonizing energy involves two parallel transitions: (1) electrifying sectors powered by fossil fuels, and (2) shifting our electricity generation to low-emissions sources like solar, wind, hydro and nuclear.

Take road transport for example: cars need to be powered by electricity, and that electricity needs to be green. Replacing all internal combustion engine cars with electric ones will take at least two decades, as will replacing all gas- and coal-fired electricity plants with low-carbon power generation — so if we want to be climate-neutral by 2050, neither transition can wait for the other. Road transport is responsible for about 12% of overall emissions, but the same dual transition applies for other energy-intensive sectors like iron and steel production (7%) or lighting and heating in buildings (17.5%).

That’s the energy transition in a nutshell: we need to move energy demand towards electricity, and the electricity supply toward low-carbon sources.

But there’s one less-discussed thing linking these two: how do we move this low-carbon electricity from the supply to the demand? That’s where electrical grids — the focus of this post — come in.

A quick primer on electrical grids

Let’s start with a short, hopefully not too technical, primer on electrical grids — they play a huge role in all our lives, but I personally didn’t really know how they worked until I started working at a renewables optimization software company in January. On the most basic level, grids are very large systems — all of Europe is a single grid, and North America is divided into an eastern and a western grid (plus the smaller Texas and Quebec grids) — consisting of power lines at different voltages (high for long-distance transmission, low for local distribution), electrical substations which step voltage up or down, and electricity producers and consumers.

As opposed to the direct current (DC) in, for example, a battery-LED system — where electrons flow from one pole of the battery through the LED to the other pole — electricity in grids is in the form of alternating current (AC) — where electrons oscillate back and forth on the power line tens of times a second: at 50 Hz (times a second) in Europe and 60 Hz in North America. One of the main jobs of a grid operator is to ensure that this frequency remains constant, because lots of stuff breaks if it is too far from nominal, which can cause grid-wide blackouts in the worst case. An oversupply of electricity (more generation than consumption) causes the frequency to increase, while an undersupply causes it to decrease; so the operator has to make sure that generation and consumption are equal at all times.

Electricity markets and renewable generation

Grid operators keep electricity generation and consumption equal by creating time-based markets for electricity. For this discussion, the day-ahead and intraday markets are the most important. On the day-ahead market, producers and consumers place bids to sell and buy the amount of electricity they want to produce or consume during each hour of the following day. At the end of the day, the operator settles these bids in an optimal way that ensures that, for each hour, the amount sold matches the amount bought. Problem solved, right?
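As a toy illustration of that settlement step, the sketch below matches sell offers and buy bids for a single hour: cheapest generation first, highest willingness to pay first, until the next bid no longer covers the next offer. Real market-clearing algorithms are far more involved; the function name `clear_hour`, the (price, quantity) tuples and the choice to report the last accepted offer price are all assumptions made for this example.

```python
def clear_hour(offers, bids):
    """Match sell offers and buy bids for a single hour.

    offers, bids: lists of (price_eur_per_mwh, quantity_mwh) tuples.
    Returns (cleared_mwh, last_accepted_offer_price), or (0.0, None)
    if no bid is high enough to meet any offer.
    """
    offers = sorted(offers)            # cheapest generation first
    bids = sorted(bids, reverse=True)  # highest willingness to pay first
    i = j = 0
    offer_left = offers[0][1] if offers else 0.0
    bid_left = bids[0][1] if bids else 0.0
    cleared, price = 0.0, None
    while i < len(offers) and j < len(bids) and bids[j][0] >= offers[i][0]:
        traded = min(offer_left, bid_left)
        cleared += traded
        price = offers[i][0]
        offer_left -= traded
        bid_left -= traded
        if offer_left == 0:  # this offer is used up, move to the next one
            i += 1
            offer_left = offers[i][1] if i < len(offers) else 0.0
        if bid_left == 0:    # this bid is filled, move to the next one
            j += 1
            bid_left = bids[j][1] if j < len(bids) else 0.0
    return cleared, price


if __name__ == "__main__":
    offers = [(10, 50), (40, 30), (80, 40)]   # e.g. wind, gas, coal
    bids = [(100, 60), (50, 30), (20, 40)]
    print(clear_hour(offers, bids))
```

By construction, the cleared volume is both the amount sold and the amount bought for that hour.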

Sadly, as anyone who has ever been outside knows, the weather (and other factors affecting generation and consumption) can’t be perfectly predicted down to the hour a whole day ahead. It could happen, for example, that the afternoon is less sunny than expected, which means that a solar farm will produce less electricity than it sold the previous day. This is where the intraday market — operating on 15-minute intervals instead of hour-long ones — comes in. In this scenario of an expected underproduction, the solar farm can go to the intraday market and place bids to buy the difference between the amount of electricity it sold on the day-ahead market and the amount it’ll actually produce, from someone who is willing to either consume less power than they bought or produce more power than they already sold.
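The solar farm's intraday position is simple arithmetic. A minimal sketch, assuming (my simplification) that the hourly day-ahead sale is delivered evenly over the four 15-minute intervals; `intraday_shortfall` is a hypothetical helper, not part of any market API.

```python
def intraday_shortfall(sold_mwh_hour, forecast_mwh_quarters):
    """Energy to buy (+) or sell (-) per 15-minute interval.

    Assumes the hourly day-ahead sale is spread evenly over four quarters.
    """
    if len(forecast_mwh_quarters) != 4:
        raise ValueError("expected four 15-minute forecasts")
    sold_per_quarter = sold_mwh_hour / 4
    return [sold_per_quarter - forecast for forecast in forecast_mwh_quarters]


if __name__ == "__main__":
    # Sold 8 MWh for the hour, but the afternoon turns out cloudier.
    print(intraday_shortfall(8.0, [2.0, 1.5, 1.0, 1.5]))
```

Positive values are the amounts the farm would need to buy on the intraday market for that interval.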

In practice, it’s usually the latter: someone will jump in and produce the extra electricity. This is big business for coal and gas plants, because they can ramp their production up (or down if the scenario is reversed) on-demand, and very quickly. As a larger percentage of electricity on the grid is generated using weather-dependent renewables, this intraday market becomes more valuable — and coal and gas-burning plants can be operated profitably for longer, even as learning effects make wind and solar power cheaper and CO2 emission prices rise.

Beyond fossil fuel-burning power plants ramping their generation up and down to meet consumption, another obvious supplier of flexibility is large batteries. These can be paid to charge when there is an oversupply, and paid again to discharge when there is an undersupply. Another plausible demand-side response comes from climate-controlled (food) distribution centers that need to run their cooling units a number of hours a day, but can be a bit flexible about exactly when those hours are. These are both useful, but they’re not happening at scale (yet).

So it’d be great for the planet if these coal and gas plants had some more competition on the intraday electricity balancing market.

(Any imbalance that is not solved on the day-ahead and intraday markets is handled by the grid operator’s balancing reserves; I won’t go into the details of these FCRs and FRRs here.)

Datacenters and flexible AI training for demand-side response

This is, finally, where datacenters and AI models come in. Here in the Netherlands, there has been some controversy in recent months about how many datacenters are being built (I bike by this imposing-looking one in Amsterdam several times a week) and how much energy they use. But given the above, I actually think datacenters have the potential to play a positive role in the intraday electricity market. Although many tasks of a datacenter, like serving websites, facilitating video calls, or powering Netflix streams, can’t really be shifted around in time at will, AI-related tasks often can be, both in research and production.

In a research setting, gargantuan AI models like DeepMind’s AlphaFold 2 can often take several days or weeks to train on dozens, hundreds or thousands of powerful machines. And labs like OpenAI already use highly-customized versions of tools like Kubernetes to orchestrate these machines. It’s not a stretch to imagine that these tools can be extended to ramp training up or down (in terms of the number of active machines, for example), along with the intraday electricity market. (In fact, I tried building a little tool similar to this myself last year!)
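To make the ramping idea concrete, here is a minimal sketch of one possible policy: scale the number of active training machines linearly between a floor and a ceiling depending on the current intraday price. Everything here is hypothetical (the function name, the thresholds, the linear rule); it is not the tool mentioned above, and actually applying the target through an orchestrator like Kubernetes is left out.

```python
def target_workers(price, min_workers, max_workers, low_price, high_price):
    """Pick a worker count: all machines when power is cheap, few when it is dear.

    price, low_price and high_price share one unit (e.g. EUR/MWh).
    """
    if high_price <= low_price:
        raise ValueError("high_price must exceed low_price")
    if price <= low_price:
        return max_workers
    if price >= high_price:
        return min_workers
    # Fraction of the way from the expensive end to the cheap end.
    fraction = (high_price - price) / (high_price - low_price)
    return min_workers + round(fraction * (max_workers - min_workers))


if __name__ == "__main__":
    for price in (10, 70, 200):
        print(price, target_workers(price, 8, 64, low_price=20, high_price=120))
```

A real policy would also need to account for checkpointing costs and for how well the training job tolerates changes in the number of machines.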

In production settings, machine learning models are often retrained periodically, once for a whole service or even many times for individual (groups of) users. This doesn’t happen exactly when the user queries or interacts with the model, but rather in an “offline” way: training happens on some schedule, and the model is saved to be retrieved for inference whenever the user wants to query it — so there’s potential for flexibility there. Even inference can happen offline: things like tagging photo libraries with the objects present in the photos are not too time-sensitive, and can probably happen flexibly within some period after the photos are uploaded without impacting user experience too much. It’s also not too crazy to imagine syncing this up to the electricity market.
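One simple way to picture that flexibility: given a price (or carbon intensity) forecast per time slot and a deadline, run the deferrable job in the cheapest slots before the deadline. The sketch below assumes the job can be paused and resumed between slots; `pick_slots` and its arguments are illustrative names, not an existing scheduler API.

```python
def pick_slots(prices, slots_needed, deadline):
    """Return the indices of the cheapest slots before the deadline.

    prices: forecast cost per slot; deadline: number of slots available.
    """
    window = list(enumerate(prices[:deadline]))
    if slots_needed > len(window):
        raise ValueError("not enough slots before the deadline")
    cheapest = sorted(window, key=lambda item: item[1])[:slots_needed]
    return sorted(index for index, _ in cheapest)


if __name__ == "__main__":
    forecast = [50, 20, 80, 10, 30, 5]
    # Two slots of work that must finish within the first five slots.
    print(pick_slots(forecast, slots_needed=2, deadline=5))
```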

Luckily, I’m not the first person to come up with this idea — see the Boden Tech datacenter in Sweden and Google’s partnership with Electricity Map, for example — but I do think that it’s under-appreciated, and often missed in discussions about Green AI and the climate risks of large AI models. Since these big models can often be scheduled to be trained at any time, perhaps counterintuitively, the more power they use, the more flexibility they can offer to the grid — and the more they can out-compete fossil fuel plants on the intraday electricity market!

I think we have a better shot at getting big tech companies and AI labs to implement ideas like this at scale than we do at getting them to stop training big AI models. So instead of looking at gargantuan AI (language) models only as a climate problem, let’s give some more attention to their potential as a climate solution.

Same.Energy, a visual search engine

Same.Energy visual search

same.energy is a deep learning-powered visual search engine built by Jacob Jackson (the creator of TabNine, see DT #18), who says it works similarly to OpenAI’s CLIP. It’s a really nice website to just browse around for a while and get lost in, which is also exactly what it’s meant for. Jackson, on the site’s about page, says:

We believe that image search should be visual, using only a minimum of words. And we believe it should integrate a rich visual understanding, capturing the artistic style and overall mood of an image, not just the objects in it. We hope Same Energy will help you discover new styles, and perhaps use them as inspiration.

This may remind you of Google’s similar image search, but I found that same.energy does a much better job of finding diverse results: where Google will usually show me different crops and resolutions of the same photo or different photos of the same object, same.energy manages to capture the “feel” of an image and show more (different!) images with that same “feel.” Some of my favorite searches so far are blue geometric patterns, glass art, and rusty surfaces.

The AI Incident Database

The Discover app of the AI Incident Database

The Partnership on AI to Benefit People and Society (PAI) is an international coalition of organizations with the mission “to shape best practices, research, and public dialogue about AI’s benefits for people and society.” Its 100+ member organizations cover a broad range of interests and include leading AI research labs (DeepMind, OpenAI); several universities (MIT, Cornell); most big tech companies (Google, Apple, Facebook, Amazon, Microsoft); news media (NYT, BBC); and humanitarian organizations (Unicef, ACLU).

PAI recently launched a new project: the AI Incident Database. The AIID mimics the FAA’s airplane accidents database and is similarly meant to help “future researchers and developers avoid repeated bad outcomes.” It’s launching with a set of 93 incidents, including an autonomous car that killed a pedestrian, a trading algorithm that caused a flash crash, and a facial recognition system that caused an innocent person to be arrested (see DT #43). For each incident, the database includes a set of news articles that reported on it: there are over 1,000 reports in the AIID so far. It’s also open source on GitHub, at PartnershipOnAI/aiid.

Systems like this (and Amsterdam’s AI registry, for example) are a clear sign that productized AI is quickly starting to mature as a field, and that lots of good work is being done to manage its impact. Most importantly, I hope these projects will help us have more sensible discussions about regulating AI. Benedict Evans’ essays Notes on AI Bias and Face recognition and AI ethics are excellent reads on this; he compares calls to “regulate AI” to wanting to regulate databases: it’s not the right level of abstraction, and we should be thinking about specific policies to address specific problems instead. A dataset of categorized AI incidents, managed by a broad coalition of organizations, sounds like a great step in this direction.

DALL·E and CLIP: OpenAI's Multimodal Neural Networks

Two example prompts and resulting generated images from DALL·E

OpenAI’s new “multimodal” DALL·E and CLIP models combine text and images, and also mark the first time that the lab has presented two separate big pieces of work in conjunction. In a short blog post, which I’ll quote almost in full throughout this story because it also neatly introduces both networks, OpenAI’s chief scientist Ilya Sutskever explains why:

A long-term objective of artificial intelligence is to build “multimodal” neural networks—AI systems that learn about concepts in several modalities, primarily the textual and visual domains, in order to better understand the world. In our latest research announcements, we present two neural networks that bring us closer to this goal.

These two neural networks are DALL·E and CLIP. We’ll take a look at them one by one, starting with DALL·E.

The name DALL·E is a nod to Salvador Dalí, the surrealist artist known for that painting of melting clocks, and to WALL·E, the Pixar science-fiction romance about a waste-cleaning robot. It’s a bit silly to name an energy-hungry image generation AI after a movie in which lazy humans have fled a polluted Earth to float around in space and do nothing but consume content and food, but given how well the portmanteau works and how cute the WALL·E robots are, I probably would’ve done the same. Anyway, beyond what’s in a name, here’s Sutskever’s introduction of what DALL·E actually does:

The first neural network, DALL·E, can successfully turn text into an appropriate image for a wide range of concepts expressible in natural language. DALL·E uses the same approach used for GPT-3, in this case applied to text–image pairs represented as sequences of “tokens” from a certain alphabet.

DALL·E builds on two previous OpenAI models, combining GPT-3’s capability to perform different language tasks without finetuning with Image GPT’s capability to generate coherent image completions and samples. As input it takes a single stream of up to 1280 tokens (first text tokens for the prompt sentence, then image tokens for the image) and learns to predict the next token given the previous ones. Text tokens take the form of byte-pair encodings of letters, and image tokens are patches from a 32 x 32 grid in the form of latent codes found using a variational autoencoder similar to VQ-VAE. This relatively simple architecture, combined with a large, carefully designed dataset, gives DALL·E the following laundry list of capabilities, each of which has interactive examples in OpenAI’s blog post:

- Controlling attributes

- Drawing multiple objects

- Visualizing perspective and three-dimensionality

- Visualizing internal and external structure (like asking for a macro or x-ray view!)

- Inferring contextual details

- Combining unrelated concepts

- Zero-shot visual reasoning

- Geographic and temporal knowledge
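Going back to the single-stream input format described before this list, here is a small sketch of how the token budget works out. The 32 x 32 grid gives 1024 image tokens, which leaves 1280 - 1024 = 256 positions for text; that text budget is derived from the numbers above, and the helper names (`build_stream`, `next_token_pairs`) and the integer token ids are placeholders, not OpenAI's code.

```python
MAX_TOKENS = 1280
IMAGE_GRID = 32
IMAGE_TOKENS = IMAGE_GRID * IMAGE_GRID   # 1024 latent codes per image
TEXT_TOKENS = MAX_TOKENS - IMAGE_TOKENS  # 256 positions left for the prompt


def build_stream(text_ids, image_ids):
    """Concatenate prompt tokens and image tokens into one sequence."""
    if len(text_ids) > TEXT_TOKENS:
        raise ValueError("prompt is too long for the text budget")
    if len(image_ids) != IMAGE_TOKENS:
        raise ValueError("expected one token per grid cell")
    return list(text_ids) + list(image_ids)


def next_token_pairs(stream):
    """Yield (context, target) pairs for next-token prediction."""
    for position in range(1, len(stream)):
        yield stream[:position], stream[position]


if __name__ == "__main__":
    stream = build_stream([1, 2, 3], [0] * IMAGE_TOKENS)
    print(len(stream), TEXT_TOKENS, IMAGE_TOKENS)
```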

A lot of people from the community have written about DALL·E or played around with its interactive examples. Some of my favorites include:

- DeepMind researcher Felix Hill’s NonCompositional, a blog post on why DALL·E is good at composition without being very systematic (it can draw a hedgehog-shaped lettuce but not a green cube on a red cube on a blue cube)

- Károly Zsolnai-Fehér’s Two Minute Papers video on DALL·E, OpenAI’s previous work that led to it, and lots of generation examples

- Fun generations from Twitter and beyond: Janelle Shane’s dawnings of DALL-E; Oriol Vinyals’ soap dispenser in the shape of a glacier; and Karol Hausman’s snail made of a corkscrew.

I think DALL·E is the more interesting of the two models, but let’s also take a quick look at CLIP.

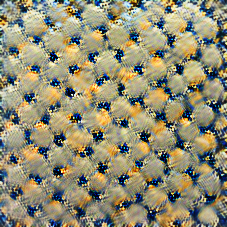

CLIP’s performance on different image classification benchmarks.

Sutskever:

CLIP has the ability to reliably perform a staggering set of visual recognition tasks. Given a set of categories expressed in language, CLIP can instantly classify an image as belonging to one of these categories in a “zero-shot” way, without the need to fine-tune on data specific to these categories, as is required with standard neural networks. Measured against the industry benchmark ImageNet, CLIP outscores the well-known ResNet-50 system and far surpasses ResNet in recognizing unusual images.

Instead of training on a specific benchmark like ImageNet or ObjectNet, CLIP pretrains on a large dataset of text and images scraped from the internet (so without specific human labels for each image). It performs a proxy training task: “given an image, predict which out of a set of 32,768 randomly sampled text snippets, was actually paired with it in our dataset.” To then do actual classification on a benchmark dataset, the labels are transformed to be more descriptive (e.g. a “cat” label becomes “a photo of a cat”), and CLIP calculates for each label how likely it is to be paired with the image. It predicts the most likely one to be the label. As you can see from the image above, this approach is highly effective across datasets. It’s also very efficient because, being a zero-shot model, CLIP doesn’t need to be (re)trained or finetuned for different datasets.
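The classification step can be sketched in a few lines. This is only an illustration of the idea, not CLIP itself: the embeddings below are tiny hand-made vectors standing in for the outputs of the real image and text encoders, and the `temperature` scaling value is an assumption for the example.

```python
import math


def make_prompts(labels):
    """Turn bare class labels into more descriptive text prompts."""
    return [f"a photo of a {label}" for label in labels]


def cosine(u, v):
    """Cosine similarity between two vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def softmax(scores):
    """Numerically stable softmax over a list of scores."""
    top = max(scores)
    exps = [math.exp(score - top) for score in scores]
    total = sum(exps)
    return [value / total for value in exps]


def zero_shot_classify(image_embedding, text_embeddings, labels, temperature=100.0):
    """Return the label whose prompt embedding best matches the image."""
    sims = [cosine(image_embedding, text) for text in text_embeddings]
    probs = softmax([temperature * sim for sim in sims])
    best = max(range(len(labels)), key=probs.__getitem__)
    return labels[best], probs


if __name__ == "__main__":
    labels = ["cat", "dog", "car"]
    print(make_prompts(labels))
    # Stand-in embeddings; a real system would get these from the encoders.
    text_embeddings = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
    image_embedding = [0.9, 0.1, 0.0]
    print(zero_shot_classify(image_embedding, text_embeddings, labels)[0])
```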

My favorite application so far of CLIP is by Travis Hoppe, who used it to visualize poems using Unsplash photos — worth a click! Another interesting one is actually how it’s used in combination with DALL·E: after DALL·E generates 512 plausible images for a prompt, CLIP ranks their quality, and only the 32 best ones are returned in the interactive viewer. Instead of researchers cherry-picking the best results to show in a paper, a different neural net can actually perform this task!
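That generate-then-rerank step boils down to a top-k selection. A minimal sketch, where `score` stands in for whatever CLIP-based text-image match score is used (an assumption for this example) and the candidates are placeholder values instead of real images:

```python
def rerank(candidates, score, keep=32):
    """Keep the `keep` highest-scoring candidates, best first."""
    return sorted(candidates, key=score, reverse=True)[:keep]


if __name__ == "__main__":
    # 512 placeholder "images"; here the score is just the value itself.
    generated = list(range(512))
    best = rerank(generated, score=lambda image: image, keep=32)
    print(len(best), best[0], best[-1])
```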